Google issued a formal apology on Tuesday after inadvertently sending a deeply offensive push notification to a segment of its app users. The notification, which contained the N-word, related to the recent controversy at the BAFTA Film Awards. The error sent shockwaves across the entertainment industry and prompted a critical examination of algorithmic oversight and content moderation.

In an era where digital communication is instantaneous and far-reaching, the incident underscored the profound challenges tech companies face in managing vast oceans of data and ensuring sensitive language is handled with the utmost care. Google swiftly moved to clarify that the inclusion of the slur was not, as some initial reports suggested, a direct consequence of an artificial intelligence system malfunctioning. Instead, the company attributed the error to a failure in its established safety filters. According to Google, these filters, designed to prevent the dissemination of harmful content, erroneously “recognized a euphemism for an offensive term on several web pages, and accidentally applied the offensive term to the notification text.” This explanation highlights the intricate and often ambiguous nature of natural language processing (NLP), where algorithms grapple with context, connotation, and the evolving landscape of language, including the intentional use of euphemisms to bypass detection.

While Google confirmed that only a “very small subset” of app users who receive push notifications were affected, the gravity of the term used meant its impact resonated far beyond the number of recipients. For those who received it, particularly individuals from communities historically targeted by such language, the notification represented a stark and unwelcome intrusion of racial prejudice into their digital space. It served as a painful reminder of the persistent struggle against systemic bias, even in the seemingly neutral realm of technology. The incident sparked immediate concern among privacy advocates and civil rights organizations, who have long cautioned against the potential for algorithms to perpetuate or amplify societal prejudices, often without human intent.

The notification in question directed users to an article from The Hollywood Reporter titled “How the Tourette’s Fallout Unfolded at the BAFTA Film Awards.” However, Google’s automatically generated accompanying text appended the phrase “See more on,” followed by the uncensored N-word. This oversight transformed what should have been an informational alert into a source of distress and outrage, forcing a renewed discussion about the ethical responsibilities of platforms that aggregate and disseminate news content. The incident also brought into sharp focus the need for rigorous testing and continuous refinement of algorithmic tools, especially when dealing with content that carries such significant historical and social weight.

A Google spokesperson promptly addressed the growing controversy, stating, “We’re deeply sorry for this mistake. We’ve removed the offensive notification and are working to prevent this from happening again.” This apology, while necessary, opens a broader conversation about the mechanisms in place to prevent such occurrences and the extent to which tech giants can truly guarantee the safety and inclusivity of their platforms. The challenge lies not only in identifying and removing offensive terms but also in understanding the nuanced ways in which they are used, referenced, and alluded to, a task that often confounds even the most sophisticated AI systems.

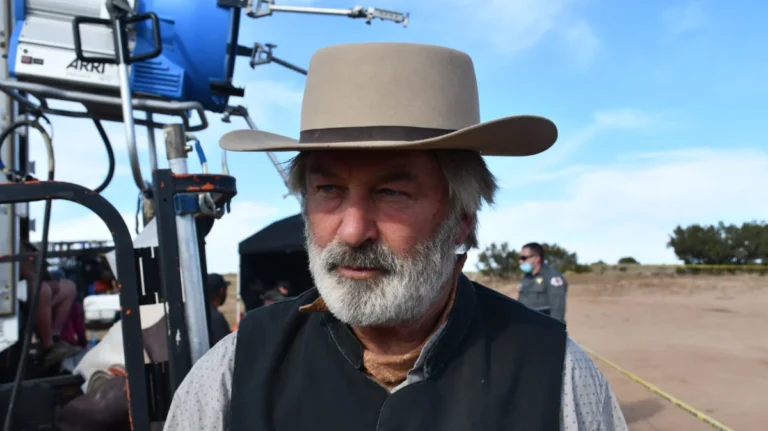

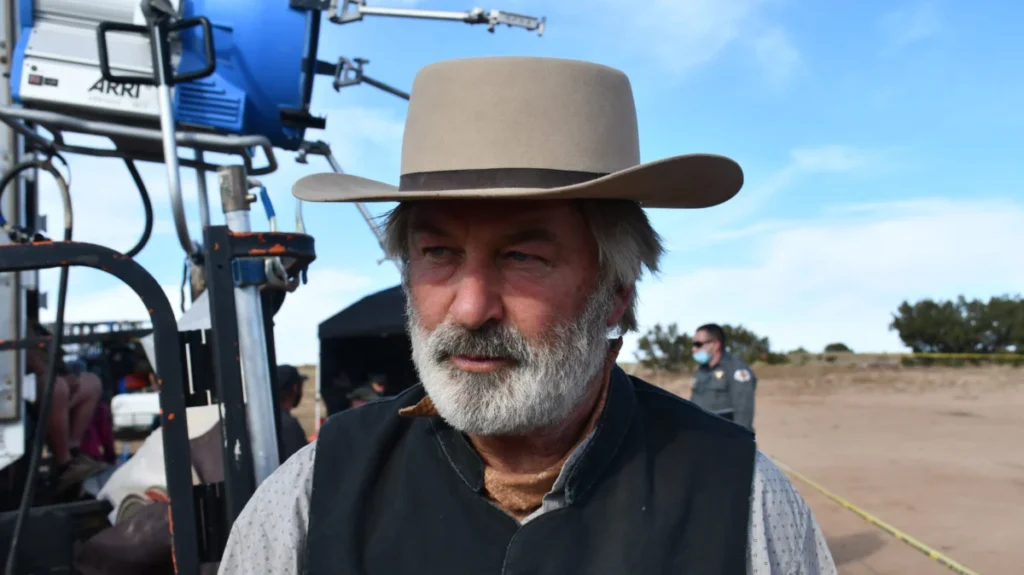

The notification arrived amid the ongoing fallout from the BAFTA Film Awards ceremony, which had taken place days earlier, on Sunday. The core incident at the awards show involved Tourette’s syndrome activist John Davidson, who involuntarily uttered the N-word while actors Michael B. Jordan and Delroy Lindo were presenting on stage. This moment, captured during a pre-taped segment, was subsequently aired without any editorial intervention, igniting a fervent debate about broadcast ethics, disability awareness, and racial sensitivity.

John Davidson’s involuntary vocalization, known as coprolalia, is a challenging symptom experienced by a small percentage of individuals with Tourette’s syndrome. Coprolalia manifests as the involuntary utterance of socially inappropriate words or phrases, including profanities or slurs, often to the profound distress of the individual experiencing the tic. While medical experts emphasize that these vocalizations are not intentional expressions of prejudice or hatred, their public occurrence, especially with a word as charged as the N-word, inevitably creates complex and painful situations. The incident at the BAFTAs thrust Davidson’s condition into the global spotlight, prompting both empathy for his involuntary tic and profound concern over the impact of the word itself.

The decision by BAFTA to air the pre-taped segment unedited raised serious questions about the production’s editorial judgment and its responsibility to both the audience and the individuals involved. Critics argued that the broadcast could have caused significant harm to viewers, particularly those from Black communities, and potentially re-traumatized them. Furthermore, it put presenters Michael B. Jordan and Delroy Lindo in an incredibly uncomfortable and publicly exposed position. The incident quickly became a flashpoint, highlighting the delicate balance between authentic representation, the demands of live (or nearly live) television, and the paramount need for cultural sensitivity and safeguarding. Many within the industry questioned whether the pursuit of unvarnished reality should ever supersede the duty to protect vulnerable individuals and communities from offensive content.

In response to the widespread criticism and the palpable unease within the industry, BAFTA leadership swiftly addressed the situation. In a letter sent to BAFTA members on Tuesday, the organization’s Chair, Sara Putt, and CEO, Jane Millichip, directly confronted the controversy. Their joint statement said they wanted to “acknowledge the harm this has caused, address what happened and apologise to all.” This direct apology underscored the organization’s recognition of the severity of the incident and its commitment to transparency. Putt and Millichip further announced that a “comprehensive review” had begun immediately. Such a review is expected to delve into all aspects of the broadcast production, editing processes, content moderation protocols, and perhaps even broader diversity and inclusion strategies within the institution. It signals an intent not only to understand what went wrong but also to implement systemic changes to prevent similar occurrences in the future.

The BAFTA incident, and Google’s subsequent algorithmic misstep, are not isolated events but rather symptoms of larger, ongoing conversations within both the entertainment and tech sectors about accountability, representation, and the ethical management of powerful platforms. For an organization like BAFTA, which has faced historical scrutiny regarding its diversity and inclusion records (famously highlighted by campaigns like #BAFTAsSoWhite), this incident presented a critical test of its commitment to fostering an equitable and respectful environment. The organization’s response, including the promised comprehensive review, indicates an understanding that addressing such controversies requires more than just an apology; it demands systemic change and a renewed dedication to its stated values.

Similarly, for Google, a company that plays an indispensable role in how billions of people access information, the offensive notification served as a stark reminder of the immense power and responsibility it wields. The incident underscores the ongoing challenge of building AI and algorithmic systems that are not only efficient but also ethically sound and culturally competent. It reignites discussions about the need for diverse teams in tech development, robust human oversight in content moderation, and proactive measures to anticipate and mitigate the potential for algorithms to propagate harmful biases. As technology continues to permeate every facet of daily life, the expectation for platforms to safeguard users from offensive content, whether human-generated or algorithmically amplified, will only intensify, demanding continuous vigilance and innovation in the pursuit of a more inclusive digital landscape.